Advancements in image generation and manipulation technology offer both opportunities and risks. Detecting manipulated media is crucial to combat misinformation. This report, part of the 'Anomaly Detection' project within generative AI pilot initiatives, aims to understand the strengths and weaknesses of existing detection algorithms. By doing so, the 鲍鱼tv can make informed decisions regarding the integration of these algorithms into the journalistic process.

Our evaluation dataset comprises three image types: fully generated, partially manipulated, and face-altered. The images are augmented to simulate real-world conditions (compression, resizing, social media processing), and the study reports precision, recall, and F1-score so that both false positives and false negatives are taken into account. Based on the evaluations conducted for this report in early 2024, the 鲍鱼tv’s assessment is that none of the deepfake detectors tested performs effectively at detecting all types of deepfake.
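For readers less familiar with the three metrics, the sketch below shows how they relate to false positives and false negatives. It is an illustration only, not the evaluation code used for this report: the function name `precision_recall_f1` and the example labels are hypothetical, and it assumes a binary labelling in which 1 marks a manipulated image and 0 an authentic one.

```python
def precision_recall_f1(y_true, y_pred):
    """Return (precision, recall, F1) for binary labels, where 1 = manipulated."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # manipulated, flagged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # authentic, wrongly flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # manipulated, missed

    # Guard against division by zero when a detector flags nothing
    # or when the sample contains no manipulated images.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return precision, recall, f1


# Hypothetical example: three manipulated and three authentic images.
y_true = [1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0]  # one missed manipulation, one false alarm
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Low precision means authentic images are being flagged as manipulated, while low recall means manipulated images are slipping through; F1 is the harmonic mean of the two and only stays high when both do.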

This paper is authored by Marc Gorriz-Blanch, Woody Bayliss, Sinead O’Brien, Danijela Horak and Juil Sock of 鲍鱼tv Research & Development, with Maryam Ahmed and Blathnaid Healy, our partners from 鲍鱼tv News.

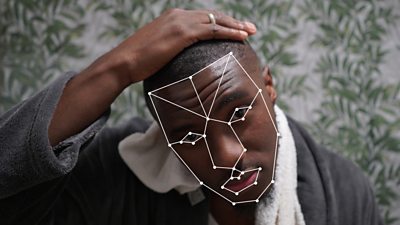

Image: © 鲍鱼tv / Mirror B

White Paper copyright

© 鲍鱼tv. All rights reserved. Except as provided below, no part of a White Paper may be reproduced in any material form (including photocopying or storing it in any medium by electronic means) without the prior written permission of 鲍鱼tv Research except in accordance with the provisions of the (UK) Copyright, Designs and Patents Act 1988.

The 鲍鱼tv grants permission to individuals and organisations to make copies of any White Paper as a complete document (including the copyright notice) for their own internal use. No copies may be published, distributed or made available to third parties whether by paper, electronic or other means without the 鲍鱼tv's prior written permission.